AWS data pipelines — payroll & HRIS

Architected AWS-based pipelines integrating Salesforce, Workday, PrismHR, Finch, and HRIS platforms. Designed ODS and CDM layers for payroll, insurance, and compensation analytics. Ran large-scale transformations with Glue and PySpark, orchestrated with Airflow.

Portfolio accounting — Hazelcast & reconciliation

Enhanced portfolio accounting systems with distributed caching (Hazelcast) and data reconciliation frameworks. Implemented monitoring agents that track production performance and verify consistency between the cache and the database.
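
As an illustration of the cache-versus-database consistency check described above, here is a minimal sketch in Python. It assumes both stores can be snapshotted as plain key/value mappings; the names `reconcile` and `ReconciliationReport` are hypothetical and not taken from the original system, which was built around Hazelcast.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Tuple


@dataclass
class ReconciliationReport:
    """Differences found between a cache snapshot and a database snapshot."""
    missing_in_cache: List[str] = field(default_factory=list)
    missing_in_db: List[str] = field(default_factory=list)
    mismatched: List[Tuple[str, Any, Any]] = field(default_factory=list)

    @property
    def consistent(self) -> bool:
        # Consistent only when no difference of any kind was recorded.
        return not (self.missing_in_cache or self.missing_in_db or self.mismatched)


def reconcile(cache: Dict[str, Any], db: Dict[str, Any]) -> ReconciliationReport:
    """Compare two key/value snapshots and report every discrepancy."""
    report = ReconciliationReport()
    # Walk the union of keys in sorted order so the report is deterministic.
    for key in sorted(cache.keys() | db.keys()):
        if key not in cache:
            report.missing_in_cache.append(key)
        elif key not in db:
            report.missing_in_db.append(key)
        elif cache[key] != db[key]:
            report.mismatched.append((key, cache[key], db[key]))
    return report
```

A monitoring agent could run a check like this on a schedule and raise an alert whenever `report.consistent` is false.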

Data governance & quality platform

Built an enterprise Data Insight & Data Quality platform with Spark, Spark SQL, and Scala. Automated checks for null analysis and correlation detection. Handled data modeling and architecture for governance initiatives.
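
To make the null-analysis check concrete, here is a small sketch in plain Python (the platform itself used Spark and Scala, so this is an illustration of the idea, not the original code). The function names and the threshold parameter are hypothetical.

```python
from typing import Any, Dict, Iterable, List


def null_ratios(rows: Iterable[Dict[str, Any]], columns: List[str]) -> Dict[str, float]:
    """Return the fraction of rows in which each column is missing or None."""
    counts = {c: 0 for c in columns}
    total = 0
    for row in rows:
        total += 1
        for c in columns:
            # A key that is absent is treated the same as an explicit None.
            if row.get(c) is None:
                counts[c] += 1
    if total == 0:
        return {c: 0.0 for c in columns}
    return {c: counts[c] / total for c in columns}


def failing_columns(ratios: Dict[str, float], threshold: float) -> List[str]:
    """List the columns whose null ratio exceeds the allowed threshold."""
    return sorted(c for c, r in ratios.items() if r > threshold)
```

For example, calling `failing_columns(null_ratios(rows, ["id", "email"]), 0.25)` would flag `email` if more than a quarter of the rows lack it.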

Clinical ETL — Crunch & Hadoop

End-to-end ETL pipelines with Apache Crunch and Hadoop MapReduce. Cleansing and normalizing clinical datasets in HDFS. REST services (Beadledom/RESTEasy) that expose the processed data to downstream systems.

Investment bank desktop clients

Rich desktop clients with Eclipse RCP, SWT, and JFace. XML marshalling with JAXB. Requirement analysis, development, estimation, and production support for global investment bank workflows.

GCP Dataflow — Confluent to BigQuery

Kafka to BigQuery streaming pipeline with real-time ingestion and monitoring. Built for high-throughput event data with schema validation and dead-letter handling.
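
The schema-validation and dead-letter pattern mentioned above can be sketched as follows. This is a simplified, library-free illustration in Python; the field names in `REQUIRED_FIELDS` and the functions `validate` and `route` are assumptions made for the example, not the pipeline's actual schema or code.

```python
import json
from typing import Any, Dict, List, Tuple

# Hypothetical schema: required field name -> expected Python type.
REQUIRED_FIELDS = {"event_id": str, "timestamp": int, "payload": dict}


def validate(raw: bytes) -> Tuple[bool, Any]:
    """Return (True, event) for a valid message, else (False, error text)."""
    try:
        event = json.loads(raw)
    except (json.JSONDecodeError, UnicodeDecodeError) as exc:
        return False, f"invalid JSON: {exc}"
    if not isinstance(event, dict):
        return False, "event is not a JSON object"
    for name, expected in REQUIRED_FIELDS.items():
        if name not in event:
            return False, f"missing field: {name}"
        if not isinstance(event[name], expected):
            return False, f"wrong type for field: {name}"
    return True, event


def route(messages: List[bytes]) -> Tuple[List[Dict[str, Any]], List[Dict[str, str]]]:
    """Split messages into valid events and dead-letter records."""
    valid, dead = [], []
    for raw in messages:
        ok, result = validate(raw)
        if ok:
            valid.append(result)
        else:
            # Keep the original bytes (lossily decoded) with the reason for rejection.
            dead.append({"raw": raw.decode("utf-8", errors="replace"), "error": result})
    return valid, dead
```

In a streaming job, the valid events would continue to the warehouse sink while the dead-letter records go to a separate topic or table for inspection and replay.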

Hadoop-to-GCP Migration

Hadoop to GCP migration with validation and handover. Data lineage preserved, jobs rewritten for Dataproc and BigQuery. Full documentation and runbooks for operations.

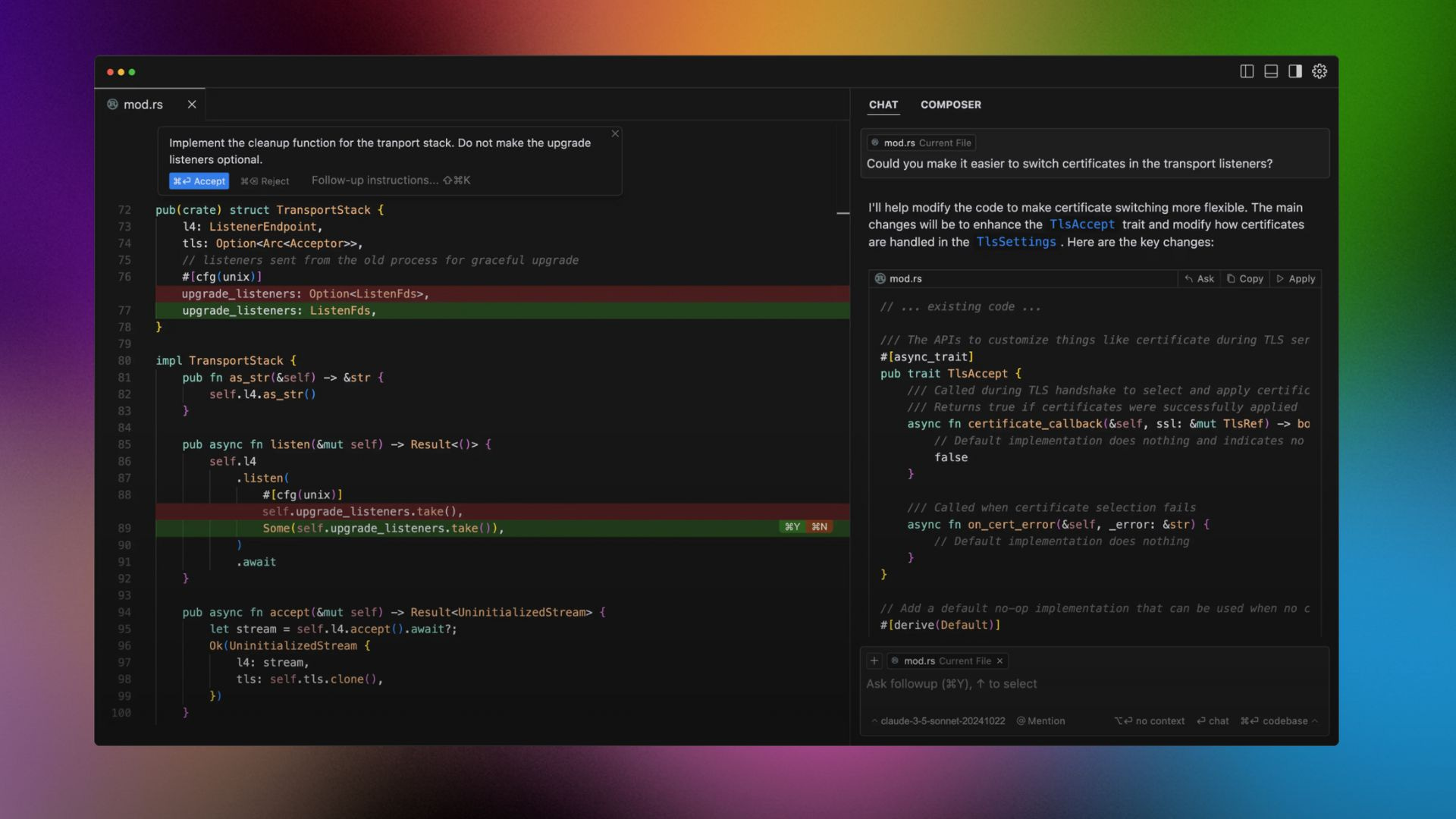

LLM Data Training — Code Review

Code review for LLM training pipelines in Python and Docker. Architecture review, best-practice guidance, and optimization for data loading and checkpointing.

RAG knowledge base — internal docs

RAG for B2B SaaS: document ingestion, embeddings, Pinecone indexing, and search API. Internal docs searchable with semantic retrieval and citation support.

REST API — partner integrations

Partner REST API with Flask and OpenAPI. Documentation, SDK examples, and runbooks. OAuth, rate limiting, and error handling for external integrations.
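
One common way to implement rate limiting for an API like this is a token bucket. The sketch below is a framework-independent illustration in Python; the source does not say which algorithm the partner API used, so the token-bucket choice and the `TokenBucket` class are assumptions.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow up to `capacity` requests in a burst, refilled at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self._clock = clock          # injectable so the limiter can be tested
        self._tokens = float(capacity)
        self._last = clock()

    def allow(self) -> bool:
        """Consume one token if available; return False when the caller should be throttled."""
        now = self._clock()
        # Refill in proportion to elapsed time, never exceeding capacity.
        self._tokens = min(self.capacity, self._tokens + (now - self._last) * self.rate)
        self._last = now
        if self._tokens >= 1:
            self._tokens -= 1
            return True
        return False
```

In a Flask service, one bucket per client credential could be consulted before each request is handled, returning HTTP 429 when `allow()` is false.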

Full-stack dashboard — Flask + Tailwind

Flask + Tailwind dashboard for pipeline health and SLA metrics. Real-time status, alerting, and drill-down into job runs and data quality.

ELK stack — log search & alerts

ELK stack: Logstash, Elasticsearch, Kibana. Centralized log aggregation, retention policies, and alerting for production incident response.

ML feature pipeline — training & serving

ML pipeline: feature engineering, Airflow orchestration, model registry, and serving API. End-to-end workflow from raw data to model predictions in production.

Internal copilot — LLM + tools

Internal copilot that answers questions using company docs and runs approved tools (Jira, Slack). LLM backend, RAG over Confluence/Notion, function calling. Access controls and audit logging.